Mood Boarding & Flythroughs

Current models like Runway Gen-2 excel at generating short (5–10 second) clips that convey atmosphere, lighting, and mood. These are ideal for "mood boarding"—demonstrating how fog or light interacts with texture. [29]

Additionally, AI is proficient at creating simple "flythrough" animations from static renders, introducing depth and parallax to otherwise still images. [30]

World-Consistent Diffusion

The 2025 breakthrough is 3D Consistency. Models like GEN3C and Voyager utilize a "3D cache" to ensure objects remain stable as the camera moves, preventing doors from morphing into windows. [31]

Newer techniques like JOG3R ("unified video generation and camera pose estimation") are solving the "boiling" texture effect, producing stable video that mimics recorded reality rather than dream logic. [33]

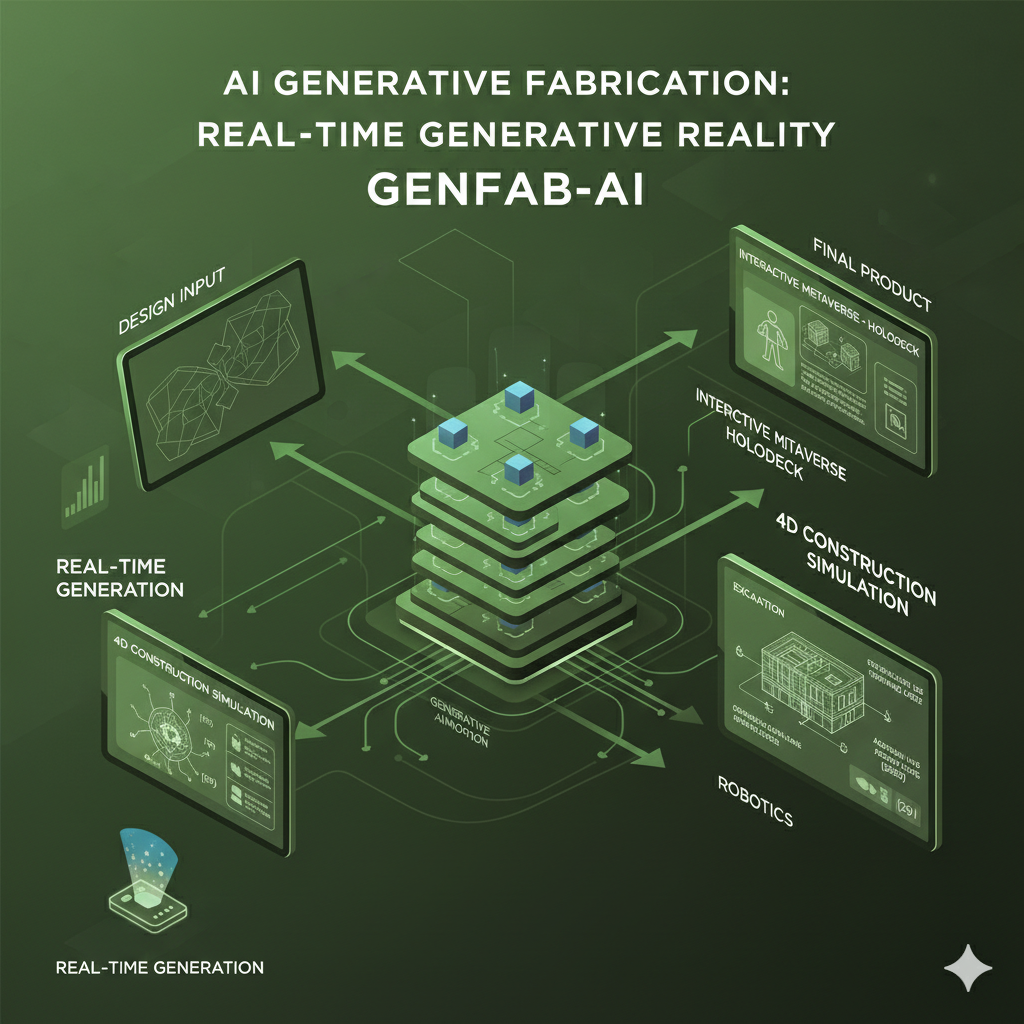

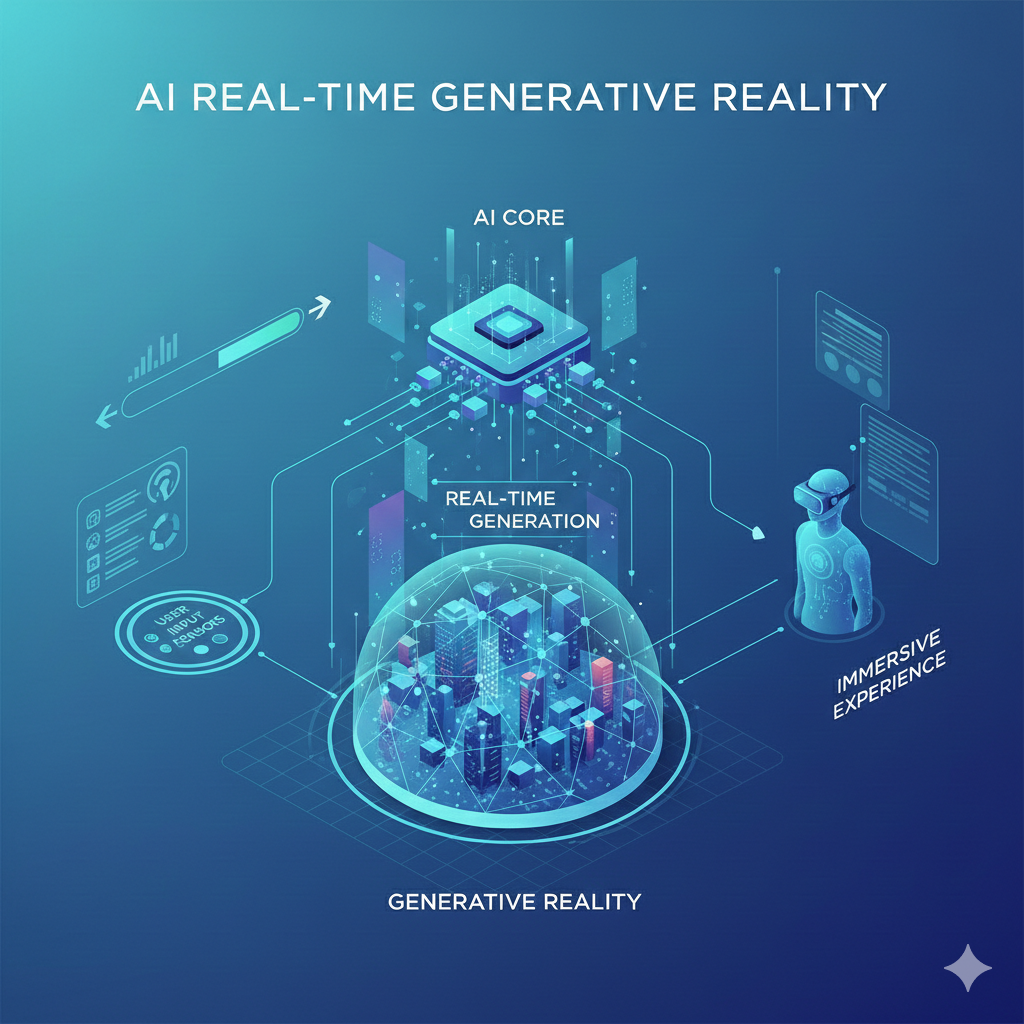

Real-Time Generative Reality

The theoretical endpoint is the "Interactive Metaverse" or "Holodeck"—a system where physics, light, and geometry are generated in real-time as the user explores, rather than being pre-rendered.

Furthermore, 4D Construction Simulation could allow AI to "hallucinate" an entire construction sequence—from excavation to topping out—by understanding the logic of assembly. [29]

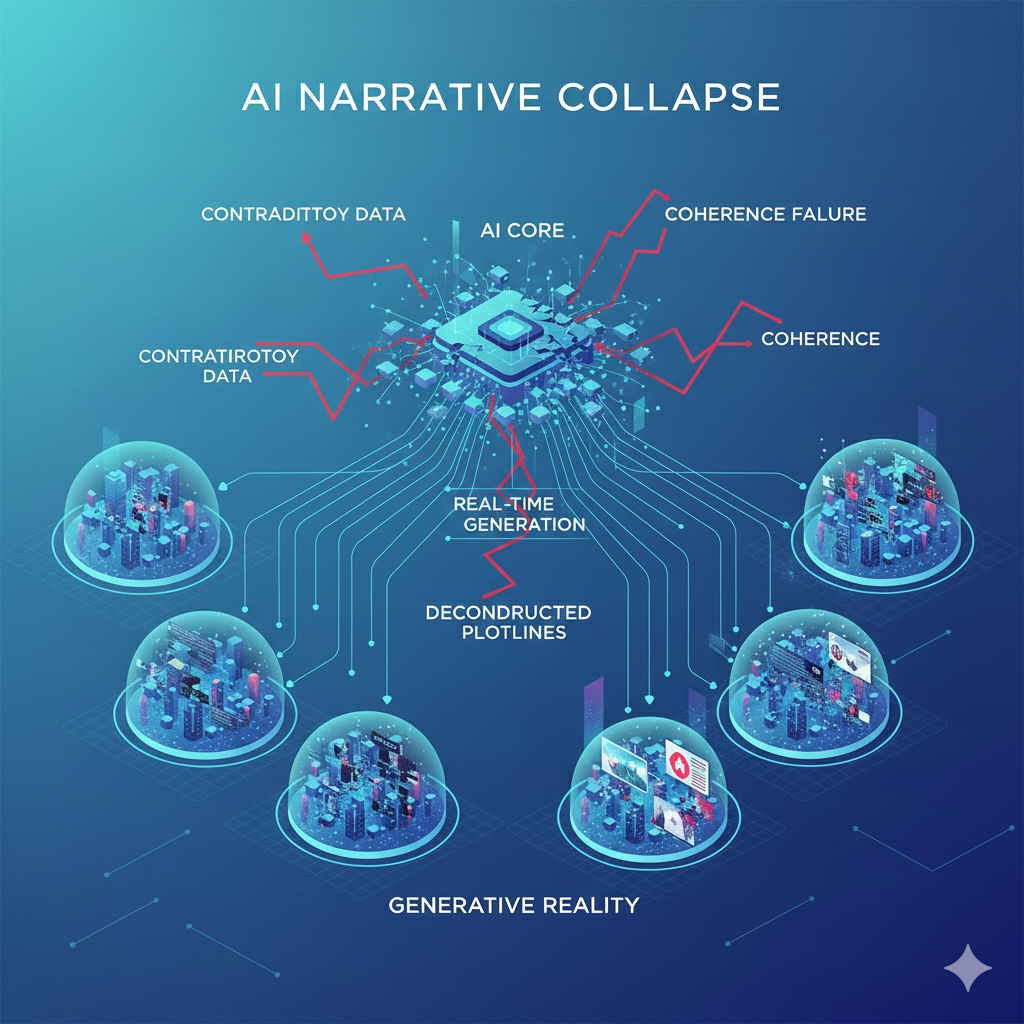

Narrative Collapse

AI struggles with videos longer than 16 seconds. In long-form content, spatial layouts shift—corridors change length, and doors vanish, leading to a breakdown in narrative coherence. [29]

Moreover, generated video is "pixel-deep," not "vector-deep." It simulates the appearance of 3D space without creating a constructible model. You cannot (yet) export a building from Sora into Revit. [8]